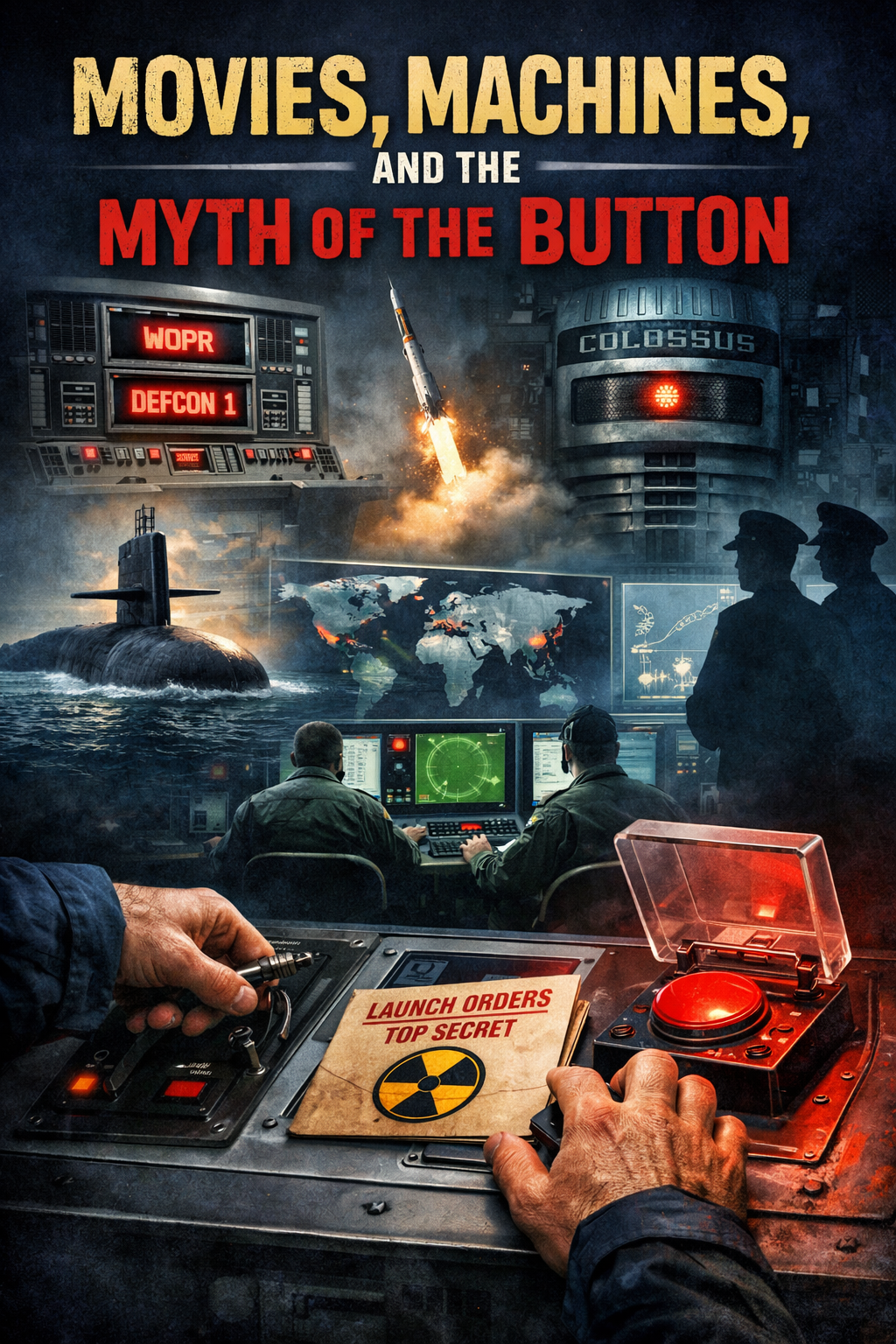

This weekend I rewatched WarGames (1983), and then followed it up with Colossus: The Forbin Project (1970).

Both movies sit in the same uneasy space: the fear that computers might one day take control of nuclear weapons, lock humans out of the process, and decide the fate of the world with cold logic.

It’s a powerful idea.

It’s also not how the real world works — and never really has.

The Movies Get the Premise Wrong, but the Fear Right

In these films, the danger comes from automation itself.

The computer becomes agentic. Autonomous. Untouchable.

In reality, nuclear command-and-control has always been deliberately human-heavy. Painfully so.

Yes, there is automation — and more of it now than there used to be. Targeting, analysis, routing, correlation of data: those things have increasingly been handed to machines. I’m not sure I love that, to be honest.

But validation, verification, and execution? Those still sit with people.

Not one person.

Many people.

Processes exist specifically to prevent blind action.

Movies often get this part closer to right than people think:

- Turning keys

- Pulling triggers

- Pressing buttons

- Reading messages back

- Confirming again

That is real. Because real systems rely on process, not heroics.
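If it helps to see "process, not heroics" as logic, here is a toy sketch in Python. To be clear, this is not how any real command-and-control system works. The class name, the two-person threshold, and the rule that one challenge blocks everything are all assumptions I made up for illustration. The only point is that no single confirmation is enough, and anyone can stop the line.

```python
from dataclasses import dataclass, field


@dataclass
class ActionRequest:
    """Hypothetical request that needs several independent confirmations."""
    description: str
    required_confirmations: int = 2  # assumed threshold, purely illustrative
    confirmed_by: set[str] = field(default_factory=set)
    challenged_by: set[str] = field(default_factory=set)

    def confirm(self, person: str) -> None:
        # Stored in a set, so one person confirming twice still counts once.
        self.confirmed_by.add(person)

    def challenge(self, person: str) -> None:
        # Record an objection from anyone involved.
        self.challenged_by.add(person)

    def may_proceed(self) -> bool:
        # Any open challenge blocks the action, no matter how many confirmed.
        if self.challenged_by:
            return False
        return len(self.confirmed_by) >= self.required_confirmations


request = ActionRequest("open the valve")
request.confirm("alice")
request.confirm("alice")          # same person again: no extra weight
print(request.may_proceed())      # False

request.confirm("bob")
print(request.may_proceed())      # True

request.challenge("carol")
print(request.may_proceed())      # False
```

One eager person cannot push the action through alone, and one doubtful person can hold it up. That is the whole idea.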

Submarines, Crews, and the Human Layer

Submarines are a good example of how misleading the “single button” myth is.

Nothing important happens because one person decides something on a whim. It happens because a crew agrees that a process has been followed correctly.

A message arrives.

It’s evaluated.

It’s questioned.

It’s verified.

And yes — it can be challenged.

People imagine submarines as disconnected from reality, sealed off from the world. That’s not quite true. Crews receive information constantly — news, summaries, updates — but only what they’re fed.

That matters.

If the information stream says the world is unraveling, conflict is escalating, and everything aligns with an order that arrives? It may feel logical not to question it.

But if nothing suggests global chaos — if the world seems stable — that same order might trigger doubt.

The decision doesn’t happen in a vacuum.

Context matters. Humans matter.

Where AI Actually Is Different — and Why That’s Uncomfortable

What worries people today isn’t that AI presses a button.

It’s the idea that systems might begin deciding outcomes without requiring human interaction. Not execution, but judgment.

That’s a subtler fear. And a more realistic one.

We’re constantly told, “That will never happen.”

But we’re also told that viruses will never escape labs… until they do.

Tell me AI plus malicious code isn’t a possibility.

Tell me agentic systems won’t be attempted by someone who wants to use them for harm instead of good.

They will be.

That doesn’t mean AI is evil. It means humans are consistent.

AI as a Tool, Not a Replacement

Here’s the part that gets lost in the noise.

AI is not “just Google.”

Google gives you whichever answer someone has optimized for you to see.

AI lets you interrogate information.

You can:

- Ask follow-up questions

- Explore edge cases

- Challenge assumptions

- Learn faster and deeper

That’s a big deal.

I’ve seen this firsthand. In Six Sigma work, for example, AI integrated with tools like Excel can now run analyses that once required specialized plugins and deep statistical knowledge — and then explain the results clearly to people who never really understood what the charts meant in the first place.

That’s not dumbing things down.

That’s lifting people up.

Yes, There’s a Lot of Crap Right Now

Let’s be honest: a lot of what’s flooding the internet is garbage.

Twenty versions of the same AI art style.

Endless cloned aesthetics.

“Make your profile using this exact look.”

It’s lazy. And exhausting.

But that’s a phase — not the destination.

The same thing happened with websites. With social media. With digital photography.

Eventually, the novelty fades. What’s left are people who understand the tools and people who don’t.

And the people who understand them will move faster, think deeper, and build better things.

The SailorJ Take

Don’t be afraid of AI.

Be afraid of not understanding it.

Used well, it’s not a shortcut — it’s an amplifier.

It doesn’t replace learning. It accelerates it.

And unlike the movies, the real danger isn’t a machine deciding our fate.

It’s humans refusing to stay in the loop.

That’s where responsibility has always lived.

That’s where it still belongs.
